In the first part of this 3-part blog series, I explained why clean rooms are useful. In part two, I went deeper into what “anonymous” data truly is under UK GDPR. I’ll conclude this installment by explaining some techniques that can be used to maintain anonymity.

How can Anonymisation be achieved?

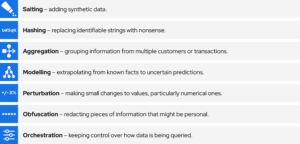

There are a host of techniques that can be used to ensure the anonymity of data. We already touched on a couple of them in part 2, but here is a more comprehensive list:

I didn’t set out for this to spell SHAMPOO; that just happened. Maybe anonymisation is like washing your hair: one should do it at least 3-4 times a week. No? Ok, moving on…

Responsible data users likely employ some or all of these methodologies in various forms, but it makes a massive difference where and how in the data flow these techniques are applied. Do them in the wrong order or too aggressively, and you undermine the whole purpose and value of the data.

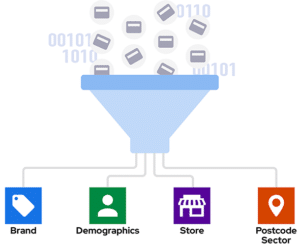

Most consumer purchase insights depend on aggregation:

This grouped data is definitely useful, but it has its limitations. What if a retailer wants to:

- Categorise transactions according to their own taxonomy?

- Create a custom panel of customers who spend in the category once a month?

- Cut the data based on a different demographic split?

- Model out from this data to a larger universe?

- Filter to include only their existing customers and add their own break-out?

To do any of these things, they would need to go back to the source data and re-run the report or model from more granular data. If they can’t access and query that granular data, they would need someone to do that for them – every single time they have a new question, a new business problem to solve, a new audience they want to target, or a new campaign they want to measure.

So how can data providers preserve the granularity of the data and empower their clients, whilst keeping the data anonymous?

Why clean rooms pass the Motivated Intruder Test

Maintaining granularity longer in the process—such as delaying aggregation—allows retailers and advertisers greater flexibility in extracting value from data sources. However, leaving that decision entirely up to the clients can cause data providers to violate the “motivated intruder” test.

A key consideration under the motivated intruder test is the “type of data release”. This is kind of obvious: as the ICO explains, if you publish the data openly on the web, your exposure is much higher than if you just share it with one person:

“With release to defined groups, you should consider in your identifiability risk assessment what information and technical know-how is available to members of that group. Contractual arrangements and associated technical and organisation controls play a role in the overall assessment. Fewer challenges may arise than with public release.”

But the “type of data release” isn’t just about the people you’re sharing with. It’s also about the methodology used to make the data available, as the guidance continues:

“This is particularly the case if you retain control over who can access the data and the conditions in which they can do so. Designing these access controls appropriately will help reduce identifiability risk and potentially allow you to include more detail, while continuing to ensure effective anonymisation. Data access environments can help you retain control over the information.”

What is a data clean room, if not a type of “data access environment”? Snowflake describes it as follows in their documentation:

“Data clean rooms offer a secure way to gain valuable insights while protecting sensitive information. They allow you to combine and analyze data from different parties with privacy-preserving configurations that help protect the underlying data.”

For example, instead of aggregating transaction data up into groups before making it available (and thereby undermining its value, as explained above), data providers can apply an aggregation policy to a table in the data clean room. This allows clients, or data “consumers”, to query the transaction data before it’s been aggregated, but only to extract outputs based on, say, five or more customer IDs. Those outputs can’t be traced back to an individual, so they remain anonymous even in the client’s hands.

So clean rooms allow data consumers to query granular data without the need for it to be copied or transferred to the consumers’ systems. The act of copying or transferring to a system the data provider cannot control would mean that both the data provider and consumer would have to treat it as personal data. But, by virtue of keeping it within an environment that only the data provider controls, it remains anonymous.

This works for modelling too. For instance, within a clean room, clients can combine data they hold (e.g. CRM) with third party data to predict the likelihood of their customers shopping with their competitors.

Data providers can use the clean room’s constraints to ensure that this model can’t output known facts about what customers have done in the past (e.g. that a known McDonald’s customer went to Burger King – see the example I gave in part 2 of this blog series), only probabilistic predictions about the future (e.g. a known McDonald’s customer has similar attributes to people that may go to Burger King).

Assuming McDonald’s has the proper consent to reach the known customer, they can send them a thoughtfully designed offer to encourage them to choose McDonald’s over Burger King in the future.

None of this is a replacement for a sound consent framework underpinning targeting activities. But it means marketers can access much more powerful, accurate and flexible targeting options as part of their strategy, measure whether or not campaigns are working and then fine-tune on the fly – all without having to rely on someone else to build everything for them.

As Affinity’s Chief Marketing and Commercial Officer, Damian Garbaccio, recently explained in Adage, “Continuous optimization no longer a tactic – it’s the strategic advantage that separates leaders from laggards.”

So, finally returning to the question posed at the very start of this blog series: when are data clean rooms an “ad tax”, and when do they add value?

| Ad Tax | Added Value |

| Simple joins & appends | Directly query granular source data |

| Pushing audiences into platforms | Creates custom models and reports |

| Engenders trust and simplifies operations, but not necessarily the only solution. | It is the only way to maintain anonymity when working with granular data. |

If you want to access the full potential of consumer purchase insights, please get in touch by filling out the form below.